Status Update: Big Tech at Crisis Point

On Nov. 3, 2019, Knight made a $3.5 million investment in new research to inform the national debate on internet governance and policy, including support for the UCLA Center for Critical Internet Inquiry (C2I2). Sarah T. Roberts, Ph.D., and Safiya U. Noble, Ph.D., of UCLA share details below.

A democratic and emancipatory society necessarily must consider and champion its people, and provide protection from dangerous dimensions of digital technologies. Without a critical examination of those technologies – in a way that keeps pace with their rapid development, uses, and implementations – the resulting social, political, and economic structures will be riddled with the same dangerous dimensions.

As of 2019, a series of public and increasingly worrisome events and circumstances has shaken confidence in internet technologies and platforms – and rightly so. The public has been eager to increase its own understanding and ability to actively participate in the steering of the digital technologies, digital technology platforms, and internet usage that now characterize much of everyday life, yet it has few mechanisms that afford such intervention. Those who should act in its stead, such as legislators and other people in gatekeeping capacities, often lack clear understanding or a full picture of these technologies, their processes, and their implications – even when they are sympathetic to the public’s desire to wrest back control.

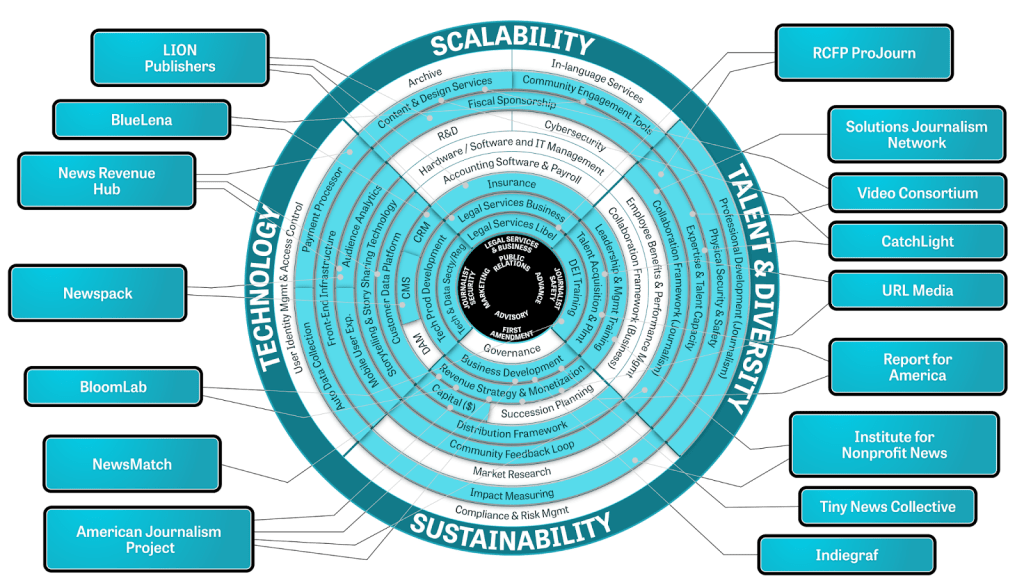

Strands of research and areas of investigation, based on the work of the UCLA Center for Critical Internet Inquiry (C2I2) co-directors and their larger scholarly communities of potential and likely collaborators, include but are not limited to:

- the role of content moderation in social and digital media economies and ecosystems

- digital technologies as sites of racial and gender harassment and discrimination

- social and digital media platform consumer protections and policy recommendations

- the impact of social and digital media platforms on underrepresented and/or vulnerable populations: children, the elderly, differently abled, socio-economically exploited, ethnic and racial groups, working-class people, women, etc.

- implications of always-on, embedded, and predictive social technologies, e.g., Internet of Things

With new support from Knight Foundation, C2I2 is working to address such uneven gaps, particularly as they impact underrepresented and vulnerable communities, workers, and publics, in investigating the social impact of digital technologies (e.g., artificial intelligence; predictive technologies; internet platforms; and their related economics, business practices and policies) that increasingly dominate many aspects of our lives. The goal of the work is to understand, reveal and intervene upon harms to communities and the broader public good. C2I2 will serve as a vital bridge to close this gap in knowledge for academics, policy makers, engaged industry personnel, and the public at large by providing both original insights derived from empirical research and the expert critical analysis and interpretation of those data.

Rather than reproducing ostensibly value-free studies of technology, researchers at C2I2 study dimensions of the internet and digital technologies that have negative or harmful impacts on those people and communities who are marginalized and underrepresented, and who, by design, bear the brunt of digital systems in the form of “technological redlining,” uneven and inequitable applications of technologies on their communities.

We understand that the deployment of predictive technologies toward vulnerable communities, in essence, renders them the “data disposable.” Our approach invokes critical theory and scholarship from a range of academic areas so that researchers can challenge pervasive notions of digital and internet technologies as beneficial or even value-neutral. The work we do dismantles notions of neutrality in digital systems, and advocates for transformational understandings of concerns that include privacy, surveillance, and social control. Such work is a hallmark of the Center.

Safiya U. Noble is an associate professor at UCLA in the Departments of Information Studies and African American Studies. You can follow them on Twitter at @safiyanoble.

Sarah T. Roberts is an assistant professor of information studies in the Graduate School of Education and Information Studies at the University of California, Los Angeles. You can follow them on Twitter at @ubiquity75.

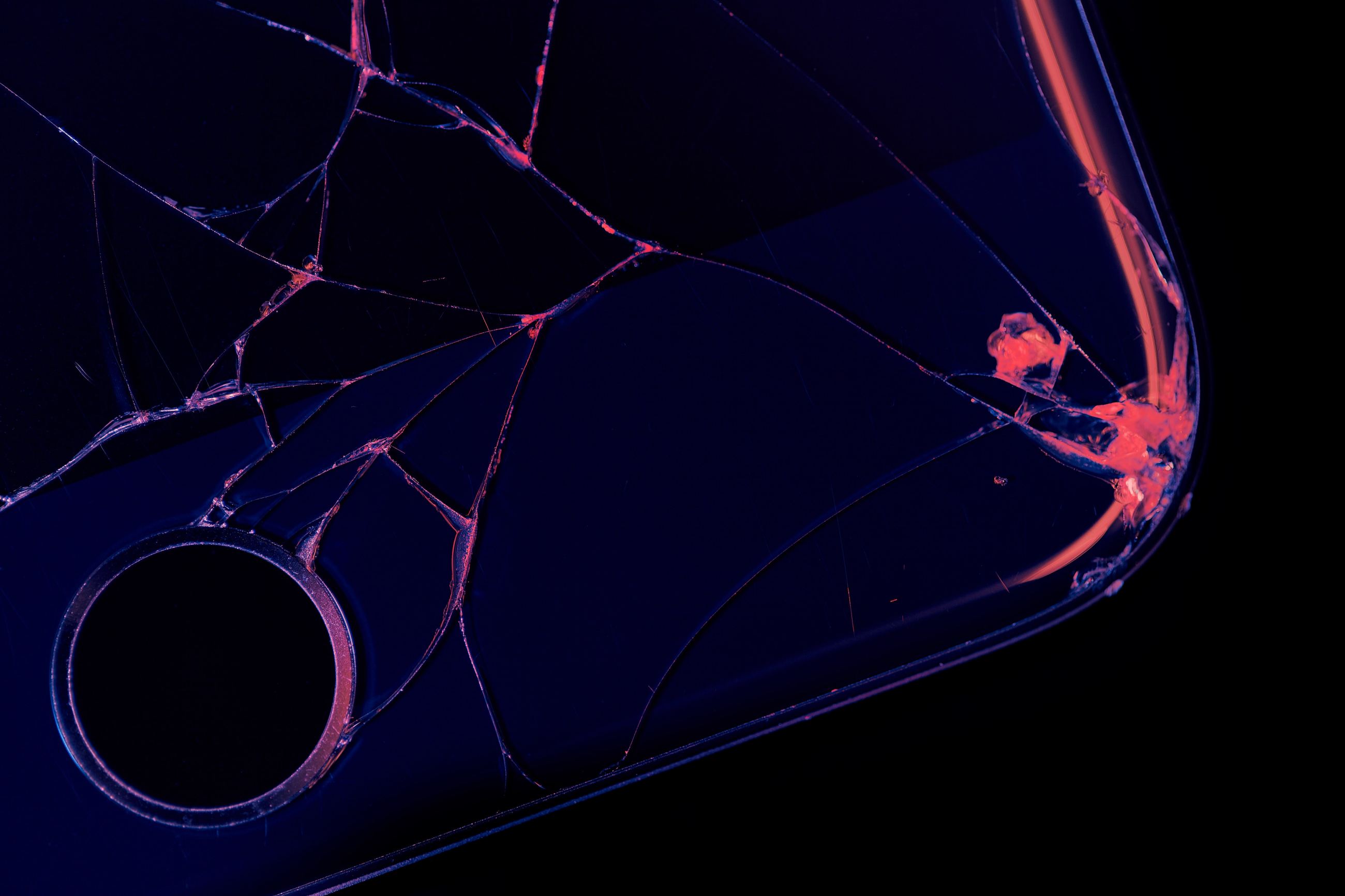

Image (top): Credit: Photo by Agê Barros on Unsplash
