Upgrading fact-checking

Matt Stempeck is director of civic technology for Microsoft. Below, he writes about the Knight Prototype Fund open call for ideas to address the spread of misinformation and to build trust in journalism. The open call, launched by Knight Foundation, the Democracy Fund and the Rita Allen Foundation, asks, How might we improve the flow of accurate information? Winners will share in up to $1 million, with an average award size of about $50,000. The deadline to apply is 5 p.m. ET April 3, 2017.

The online media ecosystem is a more complicated place than it was even a few years ago. The design language and distribution networks of online news have been co-opted by state-backed propagandists aiming to exert political influence as well as independent media hackers seeking to make a profit. The wild tales once peddled through chain emails are breaking through into more and more people’s social media feeds, leveraging tech companies’ content distribution systems and exploiting programmatic advertising. Their impact on the U.S. presidential election has made fact-checking, information flows and media trust into household conversations. This widespread interest is a welcome development. The success of democracy depends on the participation of an informed public, and we can’t ignore how the corrosive effects of such abuses can undermine it.

Knight Foundation has been an early and active supporter of projects to enhance fact-checking with new technologies. This time, along with the Democracy Fund and the Rita Allen Foundation, it is funding an open call for early-stage ideas to improve the flow of accurate information through the Knight Prototype Fund. As you consider whether to apply (you should), it’s worth reviewing what we’ve learned to date from fact-checking technology projects.

In addition to building the groundbreaking fact-checking product LazyTruth in 2012, and tracking and fighting coordinated voter suppression propaganda on the campaign trail in 2016, I recently helped Knight Foundation reflect on their investments in this space over the past eight years. Here are some of my thoughts on what we’ve seen, and where we need to go now.

CONSIDER HOW PEOPLE RESPOND TO INFORMATION AT THE COGNITIVE LEVEL

Notice how the question for the Prototype Fund challenge is phrased as “How might we improve the flow of accurate information?” We must proactively affirm that which we wish to see, rather than spend all of our energy (and more importantly, our audience’s attention spans) discussing someone else’s preferred framing and preferred narrative. George Lakoff’s work is supremely useful here.

We must stay aware of our role in a broader media environment, where consistently repeating falsehoods—even to refute them—can make our readers more familiar with lies. Presenting a reader with more information does not inherently change their mind; it can have the opposite effect. Exposure to perspectives outside of our filter bubble doesn’t necessarily make us more empathetic to those perspectives. Check out the notes from a recent discussion led by Cathy Deng on this topic at MisInfoCon.

UNDERSTAND HOW TECHNOLOGY HAS BEEN APPLIED AT EACH STAGE OF THE FACT-CHECKING PIPELINE, TO VARYING DEGREES OF EFFECTIVENESS

Many of the fact-checkers we interviewed described fact-checking as a chronological production pipeline. If we stick to this metaphor, we can plot many of the existing fact-checking tech projects by which activity they assist in that pipeline.

Establish fact-checkers’ credibility to justify their status as arbiters.

One example is the International Fact-Checking Network’s code of principles for fact-checkers, to which groups must adhere to be considered for Facebook’s recently launched misinformation inhibition features.

Reduce the cost of fact-checking labor by crowdsourcing the work.

In addition to organizational partnerships, which we’ll talk about in a minute, examples include:

- The Hypothes.is crowdsourced annotation network.

- The MediaBugs project to allow any reader to report errors to publications for correction, which ultimately failed to gain adoption by news outlets.

- CivilServant’s time-bound experiment with sticky posts encouraging fact-checking on Reddit.

Decrease the turnaround time between the appearance of misinformation and the arrival of the fact-check.

Many fact-checking tech projects work under a widely held theory that the longer misinformation has to fester, the more pernicious it becomes (and the inverse, which posits that the faster a fact-check can be delivered to an audience, the shorter the shelf life of the misinformation).

Examples include:

- Claimbuster’s promising efforts to compute a speech’s most important claims based on a historical corpus of human judgments.

- Projects to embed fact-checks contextually in-line with the original claim, through browser extensions for email (LazyTruth) and Twitter (The Washington Post), or on presidential candidates’ Medium posts (PolitiFact).

Inhibit the sharing of misinformation.

Projects here include verification toolkits that equip journalists and citizens to enhance media literacy and prevent the sharing of false user-generated content in the first place, as well as work with and on the social platforms themselves:

- Meedan’s Checkdesk project.

- Partnerships with social media platforms to identify URLs flagged as misinformation, and experimentation with techniques to inhibit their virality and develop users’ media literacy, without resorting to censorship.

- Reconfiguring social platform algorithms to disrupt echo chambers, such as Crosscloud, Escape Your Bubble (for Facebook), and Bobble (for Google Search).

Reach more people.

Far too few fact-checking projects successfully reach a meaningful audience. Those projects that have managed to reach a large number of readers have:

- Partnered with publishers who have their own existing audiences, as PolitiFact does with newspapers in states around the country.

- Partnered with social media platforms, which are late to the game but offer tremendous reach, as ABC News, The Associated Press, FactCheck.org, PolitiFact and Snopes have done with Facebook.

- Focused on embedding fact-checks in existing news habits, rather than developing third-party plugins and extensions that have historically failed to reach more than a few thousand users.

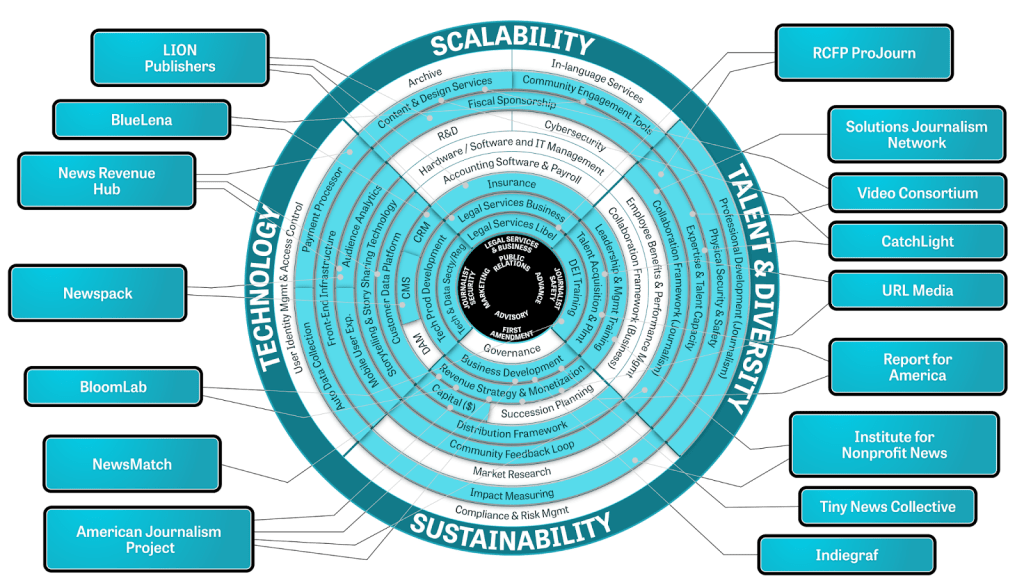

WE CAN’T AFFORD NOT TO COLLABORATE

Working together across organizations and sectors will be the best way to achieve the most impact with finite resources. Collaboration is increasingly taking place between news organizations, even competitors, as they seek to stem the tide of misinformation. Promising examples include the Electionland network of coverage spearheaded by ProPublica, PolitiFact’s impressive publisher network, including state partners, and First Draft News’s CrossCheck partnership in France.

Collaboration should also take place at the technical level, where emerging technical standards and APIs mean that developers can focus on building a meaningful user experience rather than building a database of existing fact-checks.
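To make the point about standards concrete, here is a minimal sketch of what building on shared fact-check data could look like. It assumes fact-checks are published as structured records using schema.org's ClaimReview vocabulary, which is one such standard and is an assumption here rather than something this article names. The sample claim, publisher, URL and both function names are hypothetical.

```python
import json

# Hypothetical fact-check record. Field names follow schema.org's ClaimReview
# vocabulary; the claim, publisher, and URL are invented for illustration.
SAMPLE_JSON = """
{
  "@context": "https://schema.org",
  "@type": "ClaimReview",
  "url": "https://factchecker.example/checks/123",
  "datePublished": "2017-03-01",
  "claimReviewed": "The moon is made of cheese.",
  "author": {"@type": "Organization", "name": "Example Fact Checker"},
  "reviewRating": {"@type": "Rating", "alternateName": "False"}
}
"""


def load_fact_checks(raw: str) -> list[dict]:
    """Parse JSON holding one record or a list, keeping ClaimReview items only."""
    data = json.loads(raw)
    records = data if isinstance(data, list) else [data]
    return [r for r in records if r.get("@type") == "ClaimReview"]


def summarize_fact_check(record: dict) -> str:
    """Turn one ClaimReview-style record into a one-line label for readers."""
    claim = record.get("claimReviewed", "(claim text missing)")
    verdict = record.get("reviewRating", {}).get("alternateName", "Unrated")
    checker = record.get("author", {}).get("name", "an unnamed fact-checker")
    return f'"{claim}" was rated {verdict} by {checker}.'


if __name__ == "__main__":
    for record in load_fact_checks(SAMPLE_JSON):
        print(summarize_fact_check(record))
```

The developer in this sketch never maintains a database of fact-checks. The code only reads records that fact-checkers have already published in a common shape, which leaves the effort free for how the result is shown to readers.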

And lastly, because it needs stating:

‘TRUTH’ IS PHILOSOPHICALLY COMPLICATED. STEMMING THE TIDE OF MISINFORMATION IS NOT.

There have been many, many articles on the topic of fake news. Some suggest that we should refrain from playing referee, that one person’s truth is another’s lie. We don’t have time for this degree of passivity while the forces of misinformation run circles around us and misinform the 64 percent of Americans who believe that “fabricated news stories cause a great deal of confusion about the basic facts of current issues and events.” There’s nothing philosophically complicated about refuting, or better yet, defusing outright propaganda, whether it’s the tool of a foreign state or an enterprising clickbaiter. The “truth” may be nuanced, abstract, and emerge only over long periods of time. That can’t stop us from fighting for it.

Apply for the Knight Prototype Fund open call by 5 p.m. ET April 3, 2017. Learn more during open office hours from 1-2 p.m. ET on Wednesday, March 29. Join by video or dial 1-888-240-2560 and enter meeting ID 976-202-153.
