Most Americans would believe social media misinformation warnings

Americans believe major internet companies have an obligation to warn people if items on their websites or apps contain misinformation, and most indicate they would likely believe internet company warnings about misinformation. The findings are part of a Gallup/Knight Foundation study that shows Americans remain highly concerned about the spread of misinformation, with nearly eight in 10 expressing concern misinformation could sway the outcome of the election.

The Sept. 11-24 survey of 1,269 U.S. adults who are members of Gallup’s probability-based national panel finds the following:

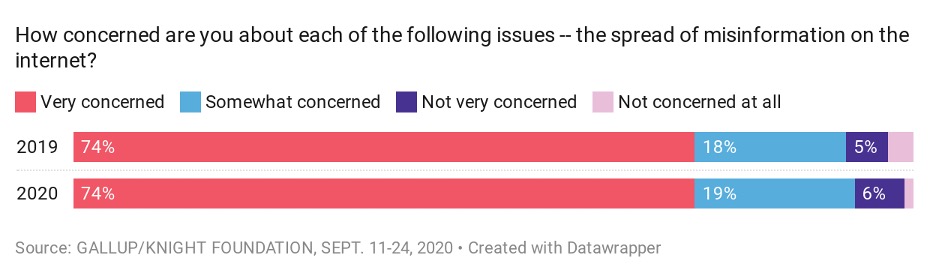

- Nearly three-quarters of Americans, 74%, are very concerned about the spread of misinformation on the internet, a stable percentage compared to the prior measurement in December.

- Majorities of all party groups are very concerned about the spread of misinformation, but more Democrats (84%) than independents (72%) and Republicans (62%) express this high degree of concern.

Americans still report substantial misinformation in news items they see:

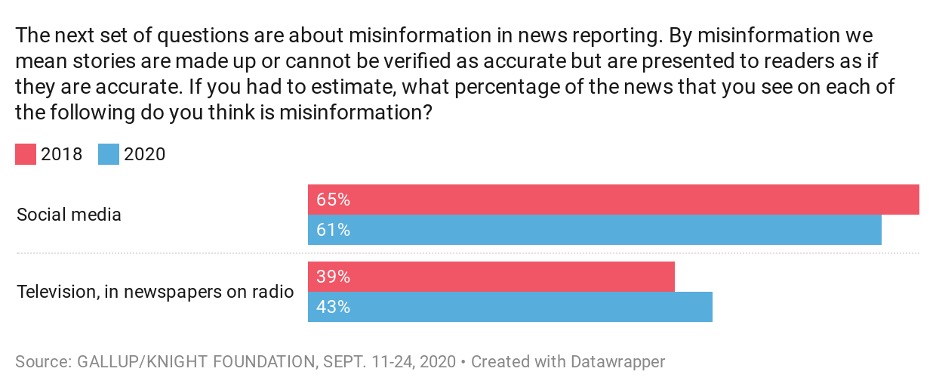

- They estimate that 43% of the news they see on television, read in newspapers or hear on the radio is misinformation, compared with 39% when the question was last asked in 2018.

- Americans estimate that a higher proportion of news items they see on social media — 61% — contains misinformation, compared with an estimate of 65% from 2018.

Neither change is statistically meaningful.

Republicans estimate they see a higher proportion of misinformation on both traditional news platforms and social media platforms than do Democrats, but the party gap is much wider for traditional news (59% versus 29%) than for social media (68% versus 53%).

- Although Americans’ estimates of the prevalence of misinformation, and their concern about its spread, are stable compared with the past, when asked to say whether misinformation poses a greater threat to the U.S. than a year ago, most say it does. Specifically, 73% perceive misinformation is a greater threat than it was last year, while 24% believe misinformation poses a similar threat and 2% a lesser threat.

- These attitudes are stable even as major internet platforms like Facebook, Twitter and Google have recently taken concrete steps to address the problems posed by misinformation, including increased labeling, more actively moderating health and election-related content, and placing limits on political ads.

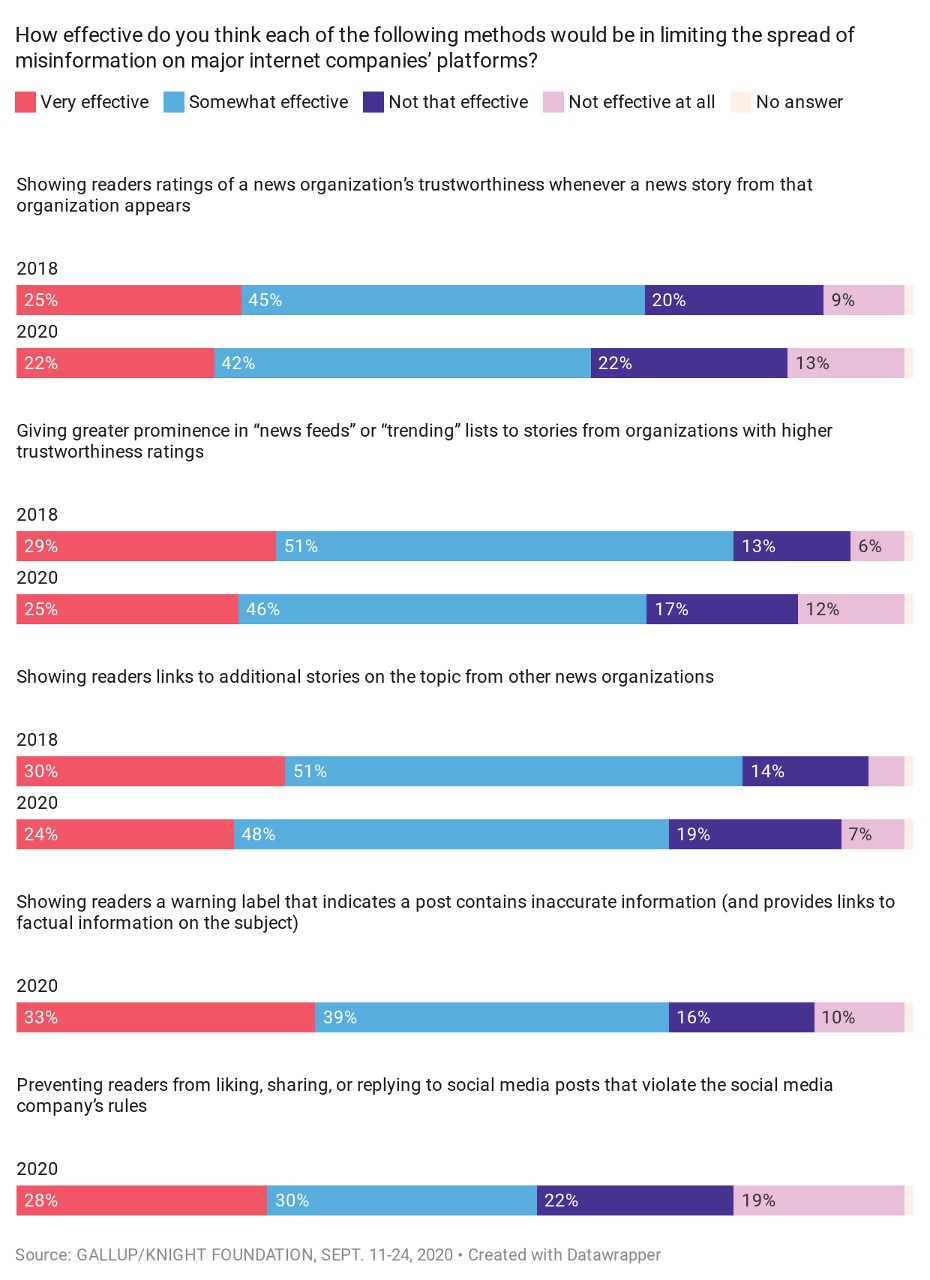

- Majorities of Americans regard each of five possible actions internet companies could take to counter misinformation as being at least somewhat effective, though no more than one in three say any of them would be “very effective.” Specifically, 33% say showing readers a warning label that indicates a post contains misinformation would be very effective. Twenty-eight percent say the same about preventing readers from liking, sharing or replying to social media posts that violate the company’s rules.

Gallup and Knight asked about three of these approaches in 2018 – showing news organization trustworthiness ratings, giving higher priority in news feeds to trustworthy organizations, and providing links to additional stories on news topics. On all three, fewer Americans today believe those approaches are effective than did so two years ago.

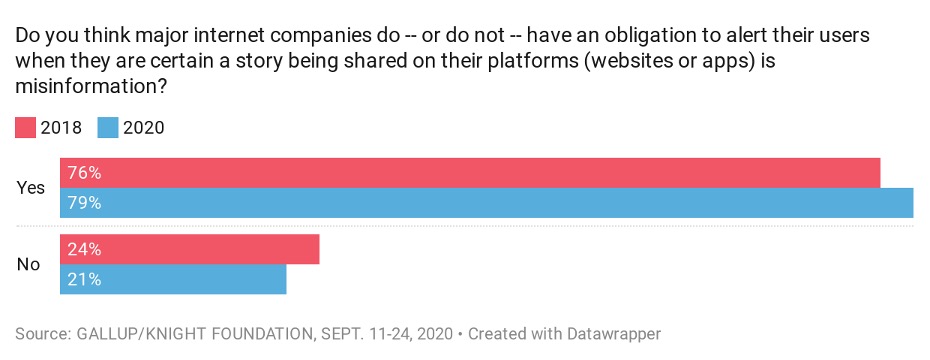

- Americans widely believe that major internet companies have an obligation to alert their users when a story shared on their website or apps is misinformation – 79% say they have such an obligation, while 21% disagree. These attitudes are stable when compared with what they were in 2018.

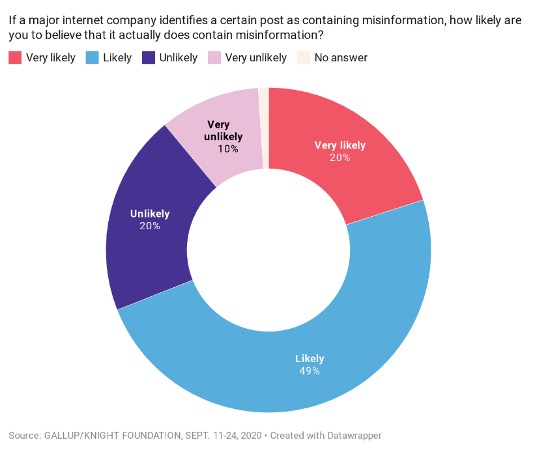

- If major internet companies flagged items appearing on their websites or apps as containing misinformation, 69% of U.S. adults say they would be “very likely” (20%) or “likely” (49%) to believe that the post actually did contain misinformation.

Democrats (93%) and independents (70%) would be inclined to believe a warning about an item containing misinformation, but Republicans would be skeptical – 37% say they would be “likely” or “very likely” to believe the warning, while 62% say they would be “unlikely” or “very unlikely” to do so.

- Americans are not averse to the government having a role in addressing the problem. Nearly half, 47%, say they would like the government to have a major role in addressing the spread of misinformation on the internet. Another 33% would like the government to have a minor role, while 19% prefer no government involvement. These attitudes are unchanged from 2018.

Sixty-three percent of Democrats, 39% of independents and 35% of Republicans say they would favor a major government role in trying to address the spread of misinformation.

The survey did not measure Americans’ awareness of major internet companies’ recent efforts to combat misinformation. Even if Americans are aware of these increased efforts, the efforts have done little to ease their concern about the spread of misinformation, to make it seem less prevalent in the information ecosystem, or to make the problem seem less of a threat than in the past.

While the public sees some benefit to various approaches internet companies have tried, fewer do so than in 2018, and only as many as one-third regard any of these as very effective methods to halt misinformation. Americans do not oppose government involvement to help solve the problem but are largely ambivalent about it.

An informed public is crucial to a healthy democracy. And while technological advances have made information more accessible to citizens, they have also done the same for misinformation. That puts a significant burden on citizens to discern what is truth from what is fiction, particularly when some of the key sources people rely on for information may help spread misinformation.

For a deeper look at Americans’ viewpoints on misinformation and technology policy, see Gallup and Knight’s previous research.

Image (top) by Maksim Goncharenok on Pexels